Jeremy Steele looks at whether crowd-sourcing an assessment of player performance works as well as data or traditional forms of scouting

Have you ever heard of the “wisdom of the crowd”? It refers to the idea that a group of people can collectively make better decisions or predictions than any individual member of the group could on their own.

Back in 1906, at a country fair in Plymouth, 800 people tried to guess the weight of an ox. And you know what? The average of their guesses was only 1% off from the true weight. Pretty impressive, right?

Now, think about a trial by jury: that’s another example of the wisdom of the crowd at work. Instead of relying on just one or a few “experts” (like in a bench trial), a group of regular people come together to form an opinion.

But here’s the thing: the wisdom of the crowd only works if a few conditions are met. Firstly, everyone has to have their own opinion; no groupthink is allowed! Secondly, people need to be able to think independently (no coercion or suggestion). And thirdly, they should have some local knowledge or expertise.

Most importantly, though, there needs to be a way to aggregate all those opinions into one cohesive idea, or judgement, or analysis. Otherwise, you just end up with a bunch of people shouting at each other.

Author and finance journalist James Surowiecki’s 2004 book, The Wisdom of Crowds, posits that when the wisdom of the crowd is done right, it can outperform even the experts. But the four conditions noted above have to hold, otherwise any value of this method is lost.

The Great Scouting Debate

The debate on whether expert scouts or big data models are better at predicting a player’s success in football is a classic one. But what if we pooled the opinions of multiple scouts together? Can they outperform both big data and individual experts?

Individual experts have experience, but they are still just one person. Big data can analyze vast amounts of information and identify patterns that even the most experienced scout may miss.

When we bring together the opinions of multiple scouts, we can tap into the wisdom of the crowd. By pooling together different perspectives and areas of expertise, we can potentially create a more complete and accurate picture of a player’s potential.

So, who comes out on top in this great debate? While individual scouts and big data models have their strengths and weaknesses, the wisdom of the crowd has the potential to revolutionize the way we evaluate players. The goal is always the same: to identify the best players and build winning teams.

Experiment: Do Journalists’ Scores Meet the Wisdom of Crowds Criteria?

When it comes to scoring football players, who better to ask than the match day journalists? So we took 40 issues of the Football League Paper from the 2016/17 season and collected the player ratings from every match reported.

The match ratings usually look something like this:

But do their individual scores meet the criteria for the wisdom of crowds, as outlined by Surowiecki?

Let’s take a closer look. While the journalist pool may not be a perfect representation of society, there is certainly diversity of opinion among them. With different backgrounds and levels of playing experience, each journalist brings their own perspective to the game.

In terms of independent thinking, the criteria for scoring a player are not clearly defined. However, this gives journalists the freedom to offer their unfiltered opinions on players, taking into account the intangibles that data often misses, such as mental toughness, work ethic, and leadership qualities.

Local knowledge is also a strength of match day journalists, as they are reporting on games in their respective areas and have a deeper understanding of the teams and players involved.

But what about the fourth criterion, the aggregation mechanism? This is where things get a bit more complicated. While individual journalists may have their own opinions, it’s not clear how these scores are aggregated into a final rating.

So, do journalists’ scores meet the wisdom of crowds criteria? It’s hard to say for sure without knowing more about the aggregation process. However, the diversity of opinion and local knowledge among journalists certainly make them a valuable source of information when it comes to evaluating football players.
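One simple aggregation mechanism is an unweighted mean of each player's match ratings, which is what the "Ave. Rating" columns in the results tables suggest. Below is a minimal Python sketch of that idea. It assumes each rating arrives as a (player, score) pair; the player names and scores are invented purely for illustration, and `aggregate_ratings` is a hypothetical helper rather than the method used to produce the published figures.

```python
from collections import defaultdict
from statistics import mean

def aggregate_ratings(records):
    """Group match ratings by player and return {player: (matches, average)}."""
    by_player = defaultdict(list)
    for player, score in records:
        by_player[player].append(score)
    return {
        player: (len(scores), round(mean(scores), 2))
        for player, scores in by_player.items()
    }

# Hypothetical match ratings, not real data
records = [
    ("Player A", 7),
    ("Player B", 6),
    ("Player A", 8),
    ("Player B", 5),
    ("Player A", 6),
]

summary = aggregate_ratings(records)
for player, (matches, average) in sorted(summary.items()):
    print(player, matches, average)
```

A plain mean treats every journalist and every match equally. A median, or weighting by match importance, would be alternative choices, each with its own trade-offs.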

The Uncertainty Surrounding Language and Consistency of Scoring

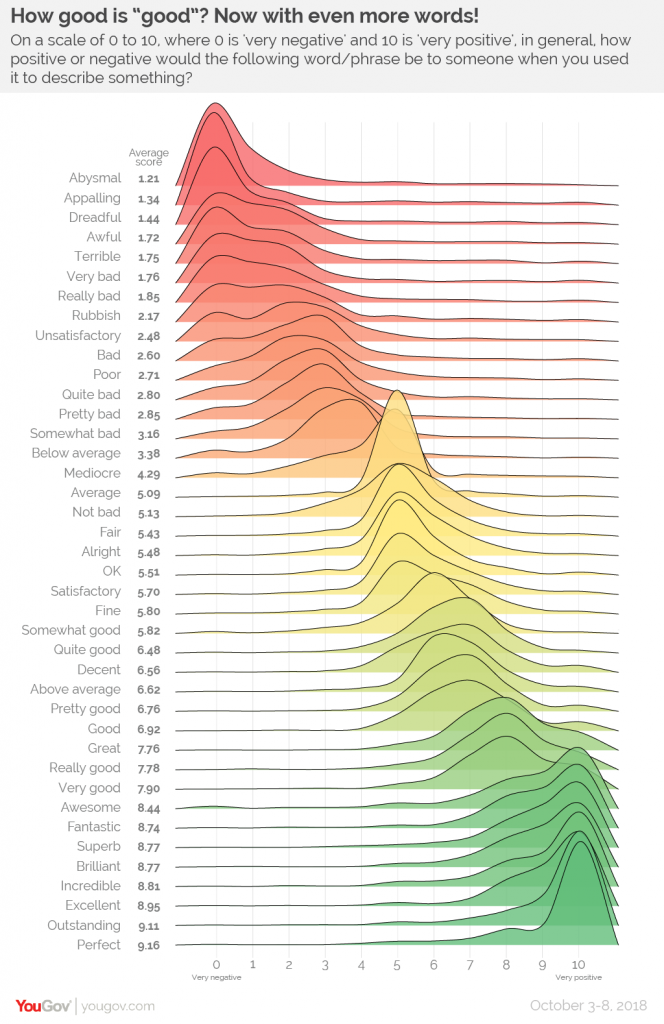

When it comes to rating football performance, there’s a lot of ambiguity around what constitutes a good or bad performance. How do we know what’s truly positive or negative language? According to a YouGov poll from October 2018, people’s opinions on what constitutes positive or negative language can vary widely.

This ambiguity can pose a problem for journalists tasked with reviewing football performances. While some publications may be lenient in their ratings, others, like L’Equipe, are notorious for being harsh critics. The daily edition of their paper is known for dishing out brutally honest match ratings.

One example of this is their coverage of the French national team’s World Cup win. L’Equipe didn’t hold back in their criticism, giving low ratings to some of the team’s star players.

L'Equipe's player ratings for France's World Cup win is still one the greatest things ever. https://t.co/ioeUqwud3Y pic.twitter.com/544OrHWsst

— harry (@harry_venison) February 16, 2021

But maybe this inconsistency isn’t necessarily a bad thing. Perhaps the broadness and openness of a “wisdom of the crowds” approach, where many people contribute to ratings without clear definitions or restrictions, is the best way to evaluate football performance. After all, opinions are subjective, and what one person deems a great performance may not be the same for another.

Results: Crowds vs. Experts

Determining the “real truth” can be challenging, as it often involves verifying information from multiple sources and considering different perspectives. However, there are some methods that can help in this process.

One way to compare the results of the Wisdom of Crowds approach to the “real world” is to collect data and evidence that support or contradict the findings of the approach.

For example, one approach is to compare the results of the Wisdom of Crowds approach to expert opinions. In this case, we compared the player ratings from our crowds approach to the official EFL Championship team of the season for 2016/17. The results were interesting, to say the least.

THE WISDOM OF THE CROWDS RESULTS: CHAMPIONSHIP 2016/17 (MINIMUM 10 MATCHES)

TOP 10 GOALKEEPERS

| Player Name | Team | Matches | Ave. Rating |
| --- | --- | --- | --- |
| Frank Fielding | Bristol City | 19 | 6.74 |
| Joe Murphy | Huddersfield Town | 10 | 6.70 |
| Kelle Roos | Derby County | 12 | 6.67 |
| Adam Davies | Barnsley FC | 33 | 6.55 |
| Ali Al-Habsi | Reading FC | 33 | 6.55 |

TOP 10 FULL BACKS

| Player Name | Team | Matches | Ave. Rating |
| --- | --- | --- | --- |
| Charlie Mulgrew | Blackburn Rovers | 19 | 6.95 |
| Scott Malone | Fulham FC | 27 | 6.74 |
| Luke Ayling | Leeds United | 27 | 6.70 |
| Ryan Sessegnon | Fulham FC | 10 | 6.60 |
| Denis Odoi | Fulham FC | 16 | 6.56 |

TOP 10 CENTRE BACKS

| Player Name | Team | Matches | Ave. Rating |
| --- | --- | --- | --- |
| Pontus Jansson | Leeds United | 21 | 7.05 |
| Aden Flint | Bristol City | 31 | 6.87 |
| Ciaran Clark | Newcastle United | 19 | 6.79 |
| Lewis Dunk | Brighton & HA | 26 | 6.77 |
| Ethan Ebanks-Landell | Wolverhampton W | 19 | 6.74 |

TOP 10 CENTRE MIDFIELDERS

| Player Name | Team | Matches | Ave. Rating |
| --- | --- | --- | --- |
| Aaron Mooy | Huddersfield Town | 28 | 7.21 |
| Craig Gardner | Birmingham City | 11 | 6.91 |
| Tom Cairney | Fulham FC | 31 | 6.90 |
| Gary O’Neil | Bristol City | 17 | 6.88 |
| Ryan Woods | Brentford FC | 29 | 6.86 |

TOP 10 WINGERS

| Player Name | Team | Matches | Ave. Rating |
| --- | --- | --- | --- |
| Jota | Brentford FC | 12 | 7.33 |
| Anthony Knockaert | Brighton & HA | 25 | 7.04 |
| Aiden McGeady | Everton FC | 24 | 6.88 |
| Jacques Maghoma | Birmingham City | 13 | 6.85 |
| Hélder Costa | Wolverhampton W | 25 | 6.84 |

TOP 10 CENTRE FORWARDS

| Player Name | Team | Matches | Ave. Rating |
| --- | --- | --- | --- |
| Glenn Murray | Brighton & HA | 26 | 6.65 |
| Sammy Ameobi | Newcastle United | 14 | 6.64 |
| Britt Assombalonga | Nottingham Forest | 21 | 6.62 |
| David McGoldrick | Ipswich Town | 22 | 6.59 |
| Jonathan Kodjia | Aston Villa | 24 | 6.58 |

We looked at the top five players in each position from the Wisdom of the Crowds and compared them to the official selection. It turns out that six of the 11 players in the official team of the season were in the top five for their position in the Wisdom of the Crowds.

The official team featured some solid picks from our WOTC selections including Dunk, Jansson, Mooy, and Knockaert, but there were also some notable omissions.

Frank Fielding, who had an average rating of 6.74, was the highest-rated goalkeeper, but he didn’t make the official team, with David Stockdale (who didn’t even appear in the top 10 of the WOTC rankings!) being preferred.

Similarly, Charlie Mulgrew was the highest-rated full-back with an average rating of 6.95, yet he was snubbed in favour of Scott Malone.

The official team of the season centre forwards were Chris Wood, Glenn Murray, and Dwight Gayle, while the top 3 centre forwards in the Wisdom of the Crowds were Glenn Murray, Sammy Ameobi, and Britt Assombalonga.
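The overlap between the two selections can be checked with a simple set intersection. The Python sketch below copies the top-five names from the tables above and uses only the official picks that are actually named in this article (nine of the 11, so `official_named` is deliberately partial). The variable names are illustrative assumptions.

```python
# Top five per position, copied from the tables above
top_five = {
    "Goalkeepers": ["Frank Fielding", "Joe Murphy", "Kelle Roos",
                    "Adam Davies", "Ali Al-Habsi"],
    "Full backs": ["Charlie Mulgrew", "Scott Malone", "Luke Ayling",
                   "Ryan Sessegnon", "Denis Odoi"],
    "Centre backs": ["Pontus Jansson", "Aden Flint", "Ciaran Clark",
                     "Lewis Dunk", "Ethan Ebanks-Landell"],
    "Centre midfielders": ["Aaron Mooy", "Craig Gardner", "Tom Cairney",
                           "Gary O'Neil", "Ryan Woods"],
    "Wingers": ["Jota", "Anthony Knockaert", "Aiden McGeady",
                "Jacques Maghoma", "Hélder Costa"],
    "Centre forwards": ["Glenn Murray", "Sammy Ameobi", "Britt Assombalonga",
                        "David McGoldrick", "Jonathan Kodjia"],
}

# Official team-of-the-season picks named in this article only
# (9 of the 11; the remaining two are not listed in the text)
official_named = {
    "David Stockdale", "Scott Malone", "Lewis Dunk", "Pontus Jansson",
    "Aaron Mooy", "Anthony Knockaert", "Chris Wood", "Glenn Murray",
    "Dwight Gayle",
}

# Flatten the crowd selections and intersect with the official picks
crowd_names = {name for players in top_five.values() for name in players}
overlap = sorted(official_named & crowd_names)

print(len(overlap), overlap)
```

With these inputs the intersection contains six names, matching the six-of-11 figure quoted above.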

Sample size is worth mentioning. Jota, the highest-rated player in the Wisdom of the Crowds with 7.33, appeared in only 12 matches and was not selected. The same probably applies to Ameobi and Gardner, who also only just broke into double figures. Averaging individual match performances can produce a high rating from a handful of games, but a team of the season rewards performance sustained across the whole campaign. This is a useful reminder for data scouting too: filtering by minutes played allows us to avoid players who have shone but in limited minutes (and, indeed, minutes played by age is a pretty strong indicator of quality in itself).
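To see how much the appearance threshold matters, here is a small Python sketch using the wingers table above. The `rank` helper and the stricter cut-off of 20 matches are assumptions chosen for illustration; only the cut-off of 10 comes from the article.

```python
# (name, matches, average rating), copied from the wingers table above
wingers = [
    ("Jota", 12, 7.33),
    ("Anthony Knockaert", 25, 7.04),
    ("Aiden McGeady", 24, 6.88),
    ("Jacques Maghoma", 13, 6.85),
    ("Hélder Costa", 25, 6.84),
]

def rank(players, min_matches):
    """Keep players with at least min_matches appearances, best average first."""
    eligible = [p for p in players if p[1] >= min_matches]
    return sorted(eligible, key=lambda p: p[2], reverse=True)

# 10 is the article's cut-off; 20 is an arbitrary stricter alternative
for threshold in (10, 20):
    names = [name for name, _, _ in rank(wingers, threshold)]
    print(threshold, names)
```

Raising the threshold from 10 to 20 removes Jota and Maghoma, leaving Knockaert, who did make the official team, at the top of the list.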

Final Thoughts

When it comes to scouting players, then, the wisdom of the crowd could be a game-changer. By pooling together different perspectives and areas of expertise, we can potentially create a more complete and accurate picture of a player’s potential. The approach is also highly scalable and flexible. While individual scouts and big data models have their strengths and weaknesses, the diversity of opinion and knowledge base makes a crowd-led approach a valuable source of information.

However, there is inherent uncertainty surrounding language and consistency of scoring, and what one person deems a great performance may not be the same for another. Ultimately, the best approach may be a combination of multiple sources, including individual experts, big data models, and the wisdom of the crowd.